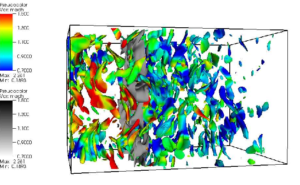

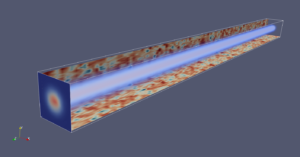

The characteristics of electromagnetic waves are considerably affected when they propagate through a turbulent medium. For example, small variations in temperature or density lead to fluctuations in the refractive index, which in turn perturb the phase and amplitude of the propagating wave. This optical turbulence can significantly distort the final wavefront and result in degrading effects such as beam spreading, scintillation and jitter. Some of these effects can be characterized by optical path differences, which have therefore been studied extensively in different flows such as shear layers, wakes and turbulent boundary layers. These effects are also consequential in long-range laser communication systems. Understanding them is important for both fundamental and practical reasons. For example, if the characteristics of the turbulence are known, then one can predict, and thus compensate for, the distortion of the wavefront. Conversely, one can use the information from the aberrated wavefront to characterize both the medium and the inhomogeneities encountered along the path.
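As a rough illustration of the optical path difference mentioned above, the sketch below integrates a refractive index field along straight rays and subtracts the aperture mean. It is only a minimal example under stated assumptions: the function names, grid sizes and the uncorrelated Gaussian index fluctuations are hypothetical stand-ins, not output from the simulations described here, and real turbulence is spatially correlated.

```python
import math
import random


def optical_path_difference(n_field, dz):
    """Return the OPD for each ray across the aperture.

    n_field[i][k] is the refractive index seen by ray i at path step k.
    Rays are assumed straight (no refraction), and the integral of n
    along the path is approximated with a simple rectangle rule.
    """
    opl = [sum(row) * dz for row in n_field]  # optical path length per ray
    mean_opl = sum(opl) / len(opl)            # aperture-averaged path length
    return [value - mean_opl for value in opl]


def synthetic_index_field(num_rays, num_steps, n0=1.0003, rms=1e-6, seed=0):
    """Hypothetical test field: mean index n0 plus uncorrelated Gaussian noise."""
    rng = random.Random(seed)
    return [[n0 + rng.gauss(0.0, rms) for _ in range(num_steps)]
            for _ in range(num_rays)]


n_field = synthetic_index_field(num_rays=8, num_steps=1000)
opd = optical_path_difference(n_field, dz=1e-3)  # path step in meters

# Root-mean-square OPD over the aperture, a common scalar measure of distortion.
opd_rms = math.sqrt(sum(v * v for v in opd) / len(opd))

# Convert OPD to a phase error for an assumed wavelength of 1 micron.
wavelength = 1.0e-6
phase_error = [2.0 * math.pi * v / wavelength for v in opd]
```

A uniform index field gives zero OPD for every ray, which is a quick sanity check on the mean subtraction.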

At TACL we use theory and highly resolved numerical simulations of both turbulence and optical phenomena to gain a better fundamental understanding of laser distortion, as well as to develop models for better prediction and correction. Here are a few recent results in paper and paper.